My GEO process (if I ever did one)

1 – Audit the current state of visibility

- Check whether LLMs know my brand outside of RAG systems, i.e. from what the model already knows without retrieving anything.

- Analyze the frequent terms that come up when I ask for a description of my brand

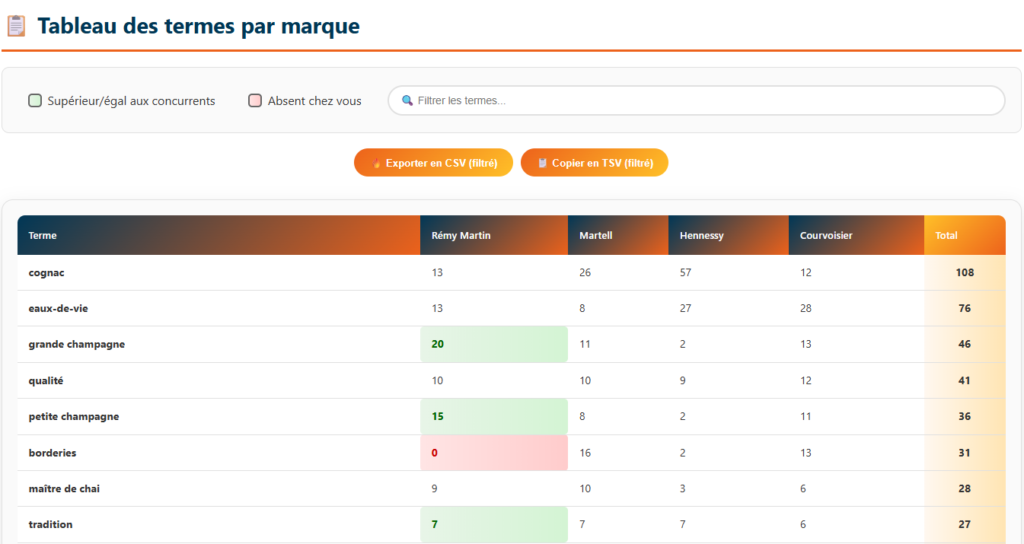

- Analyze the gap in frequent terms vs. competitors to spot strengths/weaknesses and figure out the right communication angle. I’ve got a tool that does this automatically, along with the previous steps.

- Run these frequent-term tests not only on the latest models but also on older ones, and keep a record to monitor how the results evolve.

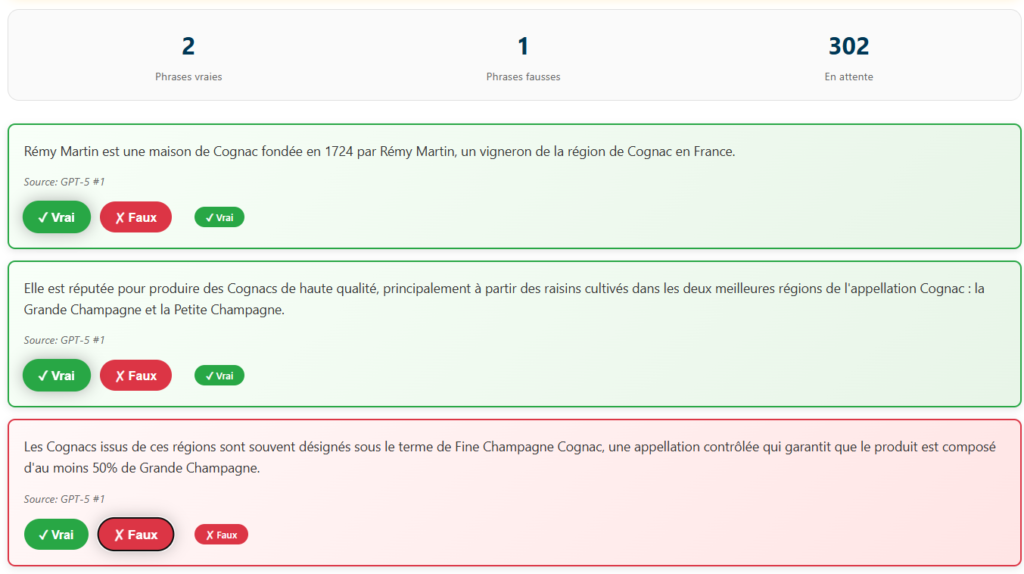

- Analyze the generated phrases used to describe my brand and list the inaccurate ones to know the kind of mistakes my persona might see about my brand (same here, I’ve got a tool to help with that)

- Check if Common Crawl has recently added my site to its database (via their index server: https://index.commoncrawl.org/).

- Generate a top 10/15 list of the most likely brands LLMs would suggest for a given product/service with an estimated probability % (don’t have a tool for this yet, but it’s in my Todo List lol)
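To make the frequent-term gap analysis concrete, here is a minimal Python sketch. It is not the tool I mention above, just one plausible way to do it: count terms in a batch of LLM descriptions of my brand and of a competitor, then list the top terms each side has that the other lacks. The stop-word list, the function names and the sample descriptions are all assumptions made up for illustration.

```python
import re
from collections import Counter

# Hypothetical stop-word list; extend it for your language and niche.
STOP_WORDS = {"a", "an", "and", "the", "is", "of", "for", "to", "in", "with", "its", "that"}

def term_counts(descriptions):
    """Count how often each non-stop-word term appears across LLM descriptions."""
    counts = Counter()
    for text in descriptions:
        words = re.findall(r"[a-z0-9']+", text.lower())
        counts.update(w for w in words if w not in STOP_WORDS)
    return counts

def term_gap(mine, competitor, top_n=10):
    """Return (top competitor terms I lack, top terms of mine the competitor lacks)."""
    my_counts, their_counts = term_counts(mine), term_counts(competitor)
    missing = [t for t, _ in their_counts.most_common(top_n) if t not in my_counts]
    unique = [t for t, _ in my_counts.most_common(top_n) if t not in their_counts]
    return missing, unique

# Placeholder descriptions, made up for illustration (not real LLM output).
my_brand = ["Acme is an affordable CRM for freelancers", "Acme is a simple CRM"]
rival = ["Rival is an enterprise CRM with strong security", "Rival is a secure CRM"]

missing, unique = term_gap(my_brand, rival)
print("Terms the rival owns:", missing)
print("Terms only I own:", unique)
```

In practice you would feed it dozens of generations per brand and per model, since a single description is too noisy to read anything into.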

2 – Technical Audit

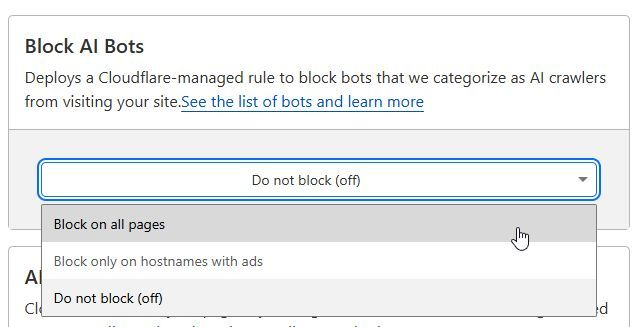

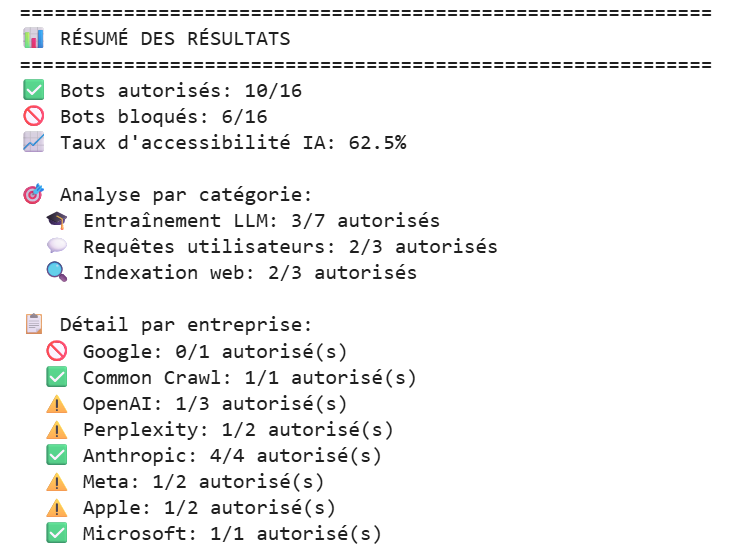

- Are AI crawlers actually able to access my site, or are they getting blocked by something like robots.txt or a firewall? I’ve got a tool that automatically checks this against the user agents of all the major LLM crawlers. If you want me to test a site for you, just hit me up (LinkedIn works fine).

- If you’re using Cloudflare, take a look at your settings, since we know it blocks AI bots by default. Log in, then go to Security → Bots → Block AI Bots.

- Run a task with an AI agent (like filling out a form or adding a product to the cart) to make sure it can actually complete the action. AI agents browsing the web on our behalf are already in development, and they might well be part of the future of the internet, so this isn’t something to ignore.

- Make sure there are no spider traps on your website, especially if you run an e-commerce site, where they often appear when filters aren’t working properly. A spider trap happens when a broken link leads to another broken link, which leads to another bugged page, and so on, so the crawler ends up stuck in an endless loop of useless, broken, or error pages. Be extremely careful about this: in his excellent conference talk (which I highly recommend), Kevin Lesieutre presented a case study of an e-commerce site where the crawler hit a spider trap and eventually stopped crawling the website altogether. After repeatedly hitting broken pages, the crawler concluded that the site was low quality and not worth crawling. So it’s definitely worth running a crawl beforehand to check for spider traps, paying particular attention to the filters on your product listing pages.

- Some chatbots, like ChatGPT, don’t handle JavaScript rendering, so make sure your content is accessible without JavaScript. To test this, don’t hesitate to ask ChatGPT to summarize your page or ask it questions about the content to see what it’s able to understand.
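The robots.txt part of the crawler access check can be sketched with Python’s standard library. This is not my tool, only a minimal illustration: it parses a robots.txt body and reports which AI crawler tokens are forbidden from fetching a given URL. The token list is not exhaustive, the sample robots.txt is made up, and a pass here says nothing about firewall or CDN blocking, which you can only confirm by sending real requests or reading your logs.

```python
from urllib.robotparser import RobotFileParser

# robots.txt tokens published by the respective vendors; this list is not exhaustive.
AI_CRAWLERS = ["GPTBot", "OAI-SearchBot", "ChatGPT-User", "ClaudeBot",
               "PerplexityBot", "Google-Extended", "CCBot"]

def blocked_ai_crawlers(robots_txt, url):
    """Return the AI crawler tokens that this robots.txt forbids from fetching url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [agent for agent in AI_CRAWLERS if not parser.can_fetch(agent, url)]

# Hypothetical robots.txt content, used for illustration only.
SAMPLE_ROBOTS = """\
User-agent: GPTBot
Disallow: /

User-agent: CCBot
Disallow: /private/

User-agent: *
Allow: /
"""

print(blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/blog/post"))
print(blocked_ai_crawlers(SAMPLE_ROBOTS, "https://example.com/private/page"))
```

To run it against a live site, download the site’s /robots.txt yourself and pass the text in; keeping the fetch separate makes the check easy to test offline.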

3 – Map Out Query Fan Outs

- Bulk collect as many search needs of the persona as possible (via PAA, Reddit questions, keywords, etc.).

- Turn those search needs into prompts, making personalized variations with my brand’s personas.

- Using your list of keywords and the personas you’ve identified, discover the prompts your users are likely to type by running the following prompt on the LLM of your choice:

If I was [your persona] trying to find [your keywords or questions] what might I ask?

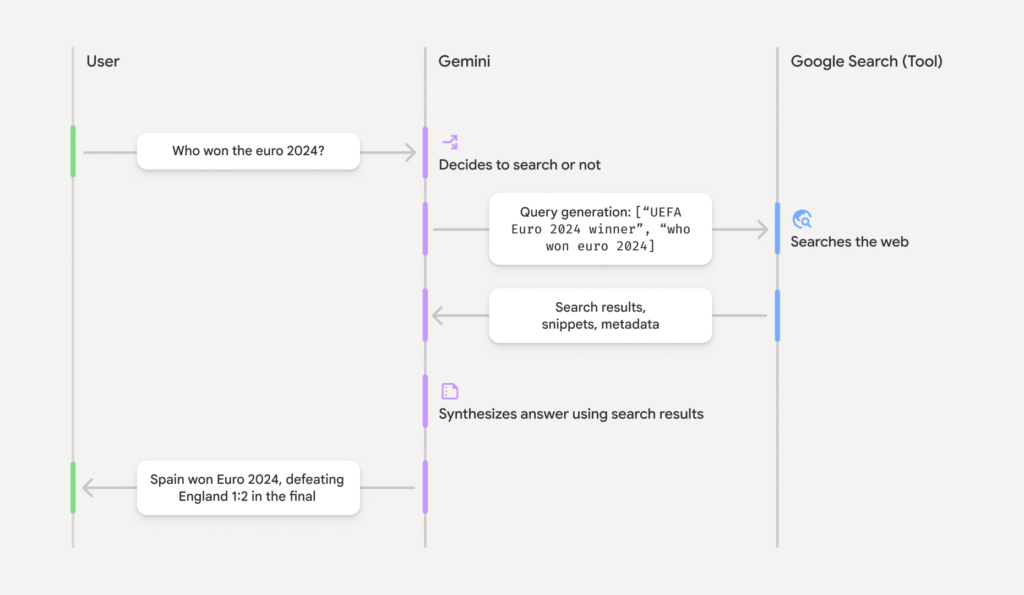

- Run all those prompts multiple times on AI Mode / Gemini / ChatGPT to grab the query fan-outs in bulk, then cluster them (you can use DataForSEO to get query fan-outs from ChatGPT in bulk). Finally, list the query fan-outs where I’m not ranking in the top 10 on Google / Bing: that list becomes a content roadmap. (I’ve got a tool to pull query fan-outs from Gemini and ChatGPT; check out my tutorials posted on LinkedIn to try it.)

Btw, some prompts won’t require the chatbot to generate query fan-outs, so try to clean your list of prompts before checking their query fan-outs.

Does your prompt generate query fan-outs? Here’s how to check:

- For ChatGPT: try the OpenAI grounding tool made by Dejan.

- For AI Mode / AI Overviews / Gemini: try the Google Grounding API (if you don’t get any query as output, it means your prompt doesn’t require query fan-outs).
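If you have more than a handful of personas and search needs, the persona prompt from this step is easy to generate in bulk. A minimal sketch, assuming you keep personas and search needs as two plain lists; the function name and the sample values are hypothetical, and the wording of the template is the one given above.

```python
from itertools import product

# Template taken from the prompt in this step; personas and needs are placeholders.
TEMPLATE = "If I was {persona} trying to find {need} what might I ask?"

def build_meta_prompts(personas, needs):
    """Return one meta-prompt per (persona, search need) pair, without duplicates."""
    seen, prompts = set(), []
    for persona, need in product(personas, needs):
        prompt = TEMPLATE.format(persona=persona.strip(), need=need.strip())
        if prompt not in seen:
            seen.add(prompt)
            prompts.append(prompt)
    return prompts

# Made-up inputs for illustration.
personas = ["a freelance designer", "a startup CTO"]
needs = ["the best CRM", "CRM pricing"]

for prompt in build_meta_prompts(personas, needs):
    print(prompt)
```

Each generated line is then sent to the LLM of your choice, and the questions it returns become the prompts you test for query fan-outs.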

4 – Content Creation

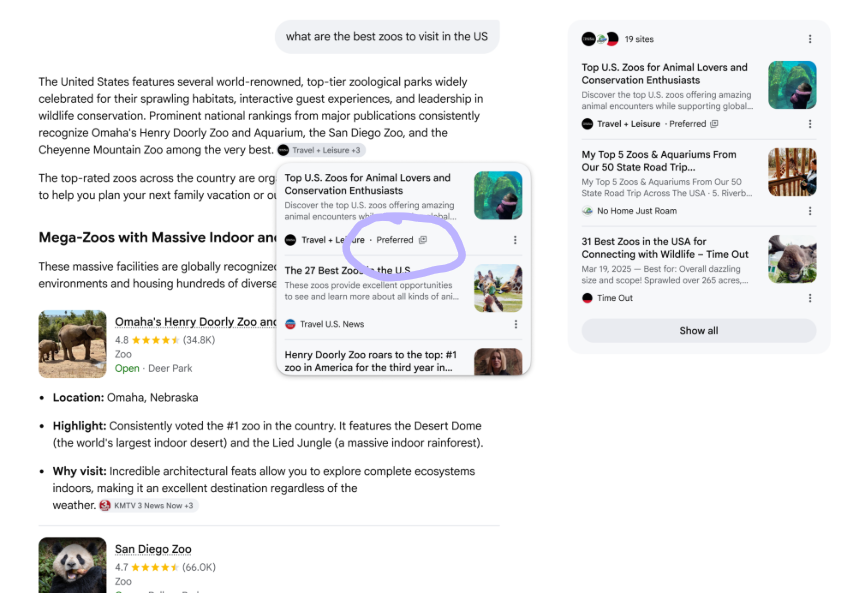

- A classic move: make sure your brand shows up in as many ranking / “top” / “best X to do Y” list articles as possible.

- From my list of query fan-outs (but only the ones I know generate outputs mentioning brands), I check the rankings on Google (and maybe also Bing, which I don’t think should be written off), and I reach out to the sites that are already ranking to ask them to add a mention of my brand. Having done this before, I know it’s very time-consuming, so we reserve this action for the query fan-outs we consider high-priority or lucrative.

- If some query fan-outs are topics that can be covered on my site, then create one page per prompt (that page will be optimized for all the query fan-outs generated by that prompt).

- Sometimes, some prompts will generate query fan-outs in English, even if the language of my site or the prompt itself is different. In that case, you’ll need to either create external content on English-language websites or develop an English version of the site to target those English query fan-outs.

📄 On American English specifically: A study published in April 2025 (arXiv) tested GPT-4, Llama, Claude and Gemini by analyzing training data composition, tokenizer efficiency and generative behavior. The finding: these models show a structural bias toward American English — it dominates pre-training corpora and is processed more efficiently at the tokenizer level. If you’re creating English content to capture LLM visibility, writing in American English (not just neutral English) may give you a small but real edge.

- I’d especially focus on BOFU (bottom-of-funnel) content. For example, an article like “My review of site X: is it trustworthy?” ranks easily on Google/Bing and also gets pulled by ChatGPT when someone asks about the reliability of an e-commerce site before buying. I’ve tested it: it works well, and I think AI can serve as the final step for the persona before conversion, so you might as well control the narrative. (Edit 30/03/26): It doesn’t work anymore. ChatGPT has become very suspicious of marketing listicles and tries to rely only on trustworthy providers.

- Keep pushing a quality content strategy that covers the entire topic. If your pages aren’t more “valuable” than basic AI summaries, then honestly your site has no reason to exist. We actually published an article with Paul Grillet that breaks down our process and method to create outstanding content.

- If you’re running an e-commerce business, make sure your product feed and visibility on Google Shopping are up to date and working properly. Indeed, we know that ChatGPT relies on Google Shopping to power its own ChatGPT Shopping display.

- If you’re running an e-commerce site, the way you write your product page descriptions also matters. Here’s a breakdown of an arXiv paper that explains how to do it.

- I’ll try to create as many fake Wikipedia pages as I can. ChatGPT seems to crawl Wikipedia pages regularly and to remember their content when it needs to build an answer with sources, even if the Wikipedia page was deleted weeks ago (obviously, that kind of page gets deleted quickly by moderators).

Bonus (May 2026): Preferred Sources Reach AI Mode

As of May 31, 2026, Google is testing the surfacing of users’ Preferred Sources directly inside AI Mode and AI Overviews, not just the News tab anymore.

Until now this was a media-only play, mainly useful to land in Google News carousels. With the test bleeding into the AI surfaces, any site that publishes regular informational content has a reason to chase it: readers explicitly tagging you as a trusted source is a hard signal to fake, and Google looks like it is starting to weight it.

Setup is trivial. The opt-in URL pattern is:

https://www.google.com/preferences/source?q=YOUR-DOMAIN.com

Stick it on a visible button. On this blog I shipped two CTAs: one in the header menu, one auto-injected at the top and bottom of every post (you have probably seen them already). The user clicks, lands on Google’s Preferences page with the domain pre-filled, two taps and you are in their preferred set.
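If you template your pages in Python, building the link from the opt-in URL pattern takes a few lines. A minimal sketch: the URL pattern is the one given above, while the function names, the button label and the `rel`/`target` attributes are my own assumptions, not anything Google prescribes.

```python
from html import escape
from urllib.parse import urlencode

BASE_URL = "https://www.google.com/preferences/source"

def preferred_source_url(domain):
    """Build the opt-in URL for a domain, URL-encoding the query value."""
    return f"{BASE_URL}?{urlencode({'q': domain})}"

def preferred_source_button(domain, label="Add us as a preferred source on Google"):
    """Return an HTML link for the CTA; the label text is a placeholder."""
    href = escape(preferred_source_url(domain), quote=True)
    return f'<a href="{href}" target="_blank" rel="noopener">{escape(label)}</a>'

print(preferred_source_url("example.com"))
print(preferred_source_button("example.com"))
```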

Who should ship this: not only news sites. If you publish regular long-form content (blogs, deep-dives, tutorials, reviews), it is a clean signal play. Cheap to set up, basically free upside.

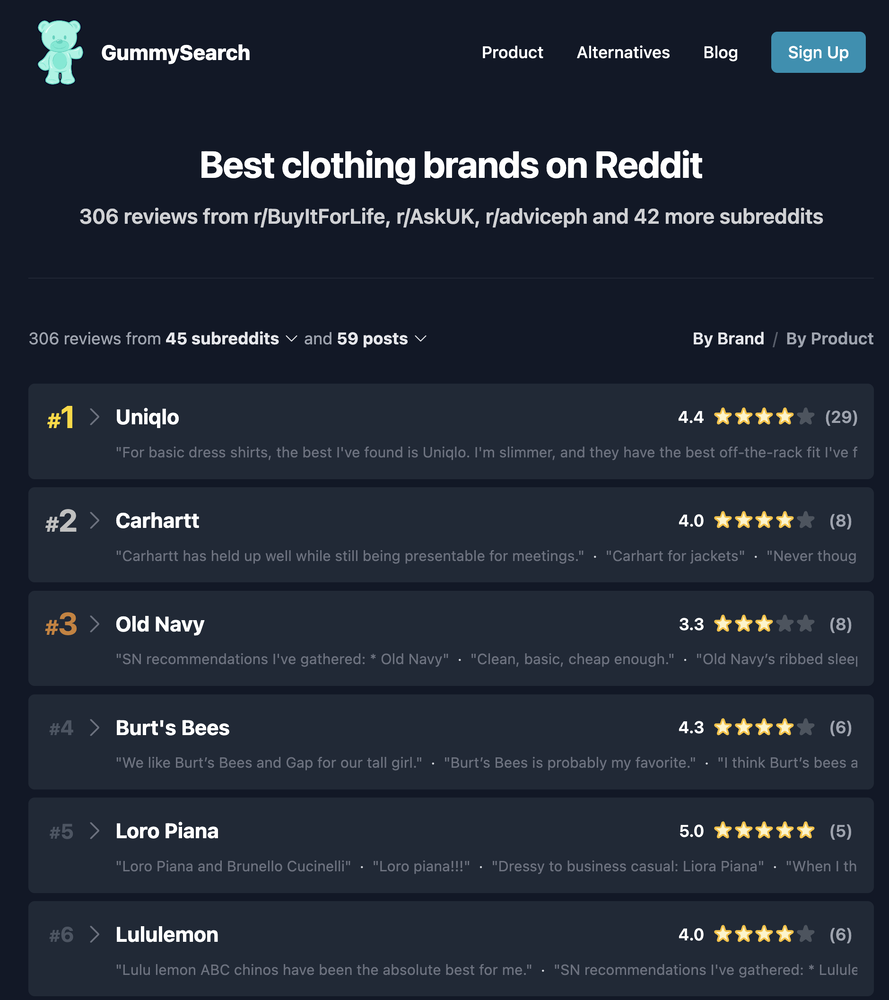

Tactic to Test (June 2026): Recycle Reddit Reviews on Your Site

Quick caveat: I have not tested this one myself yet. I am writing it down here because it is clever enough that I want to try it on a client soon.

The pattern, spotted by Malte Landwehr (CPO & CMO at Peec AI) in this LinkedIn post: when ChatGPT fans out queries to ground its answers, especially when it is hunting for user opinions, it often appends “reddit” directly to the query. So the obvious play is to give it a page that looks exactly like the kind of Reddit thread it was about to fetch.

How to apply it: create a page on your site titled “{Brand or Product} reddit reviews” and quote positive statements about your brand or product taken from real Reddit threads. According to Malte, these pages have a strong chance of being picked up by ChatGPT’s grounding process and surface as citations.

Three flavours, decreasing in cleanliness: pure recycling of real Reddit reviews (the safe play), hand-picked positive ones only (still defensible), or fabricated quotes engineered to look like Reddit comments (grey hat, do not do it unless you enjoy reputational risk).

Worth a shot if your AI visibility audit shows fan-out queries loaded with “reddit” mentions, or if you have noticed ChatGPT systematically pulling Reddit threads when asked about your category.
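To decide whether the tactic is worth testing for your category, you can measure how often “reddit” shows up in the fan-out queries you have already collected. A minimal sketch with made-up queries; the function name is hypothetical and what counts as “loaded” is your call.

```python
def reddit_share(fan_out_queries):
    """Return the fraction of fan-out queries that mention "reddit"."""
    if not fan_out_queries:
        return 0.0
    hits = sum("reddit" in query.lower() for query in fan_out_queries)
    return hits / len(fan_out_queries)

# Placeholder fan-out queries, made up for illustration.
queries = [
    "best crm for startups reddit",
    "acme crm pricing",
    "acme crm reviews reddit",
    "acme crm vs rival",
]
print(f"{reddit_share(queries):.0%} of fan-out queries mention reddit")
```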

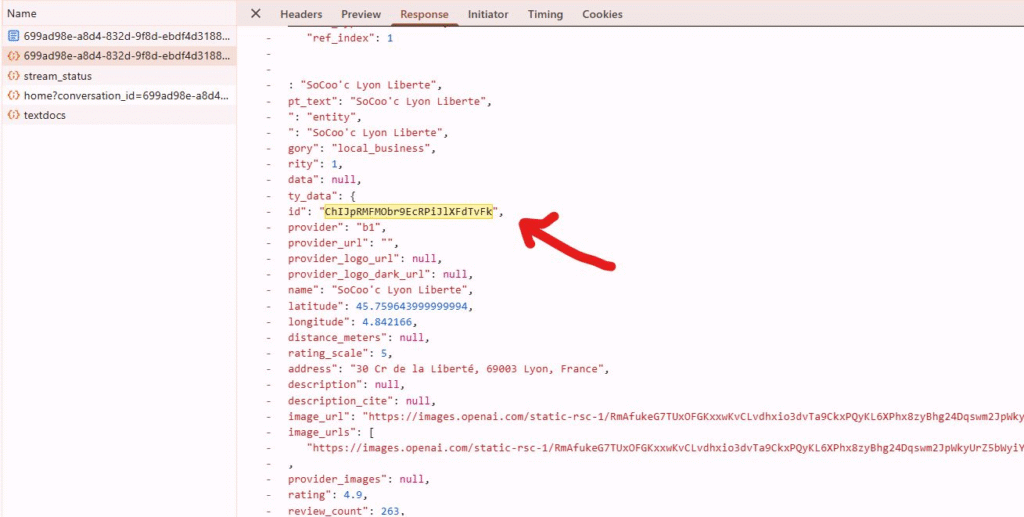

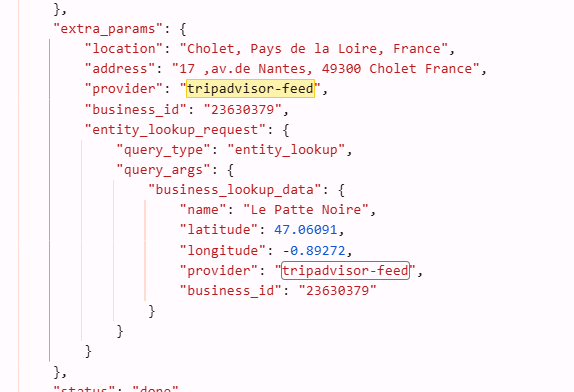

5 – Local GEO

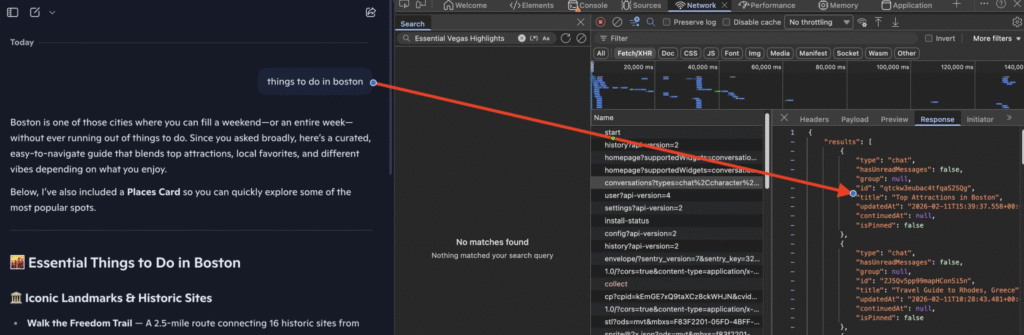

If local visibility in GEO is something you care about, here’s a reminder worth keeping in mind: ChatGPT still relies on the Google Maps API for its local map results. How do we know? In the JSON response code on the ChatGPT interface, the local entity IDs match the Google Maps API place ID format, confirming Google Maps remains the underlying data source.

6 – Measure

- Check the AI logs (especially the ones triggered by a user request) to get an idea of how many times your content is being pulled as a source, and which content specifically. I’ve got a LinkedIn post listing all the logs worth tracking.

For the full server log analysis playbook (Googlebot validation, fake bots, crawl waste, the 130-day rule, internal linking heatmaps and the eight patterns you can only see in logs), I just published a complete field guide. Read the complete log analysis guide for SEO and GEO →
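For a first read on user-triggered AI hits, a short script over your access log is enough. The sketch below assumes the common “combined” log format used by nginx and Apache, and assumes that `ChatGPT-User`, `Perplexity-User` and `Claude-User` are the tokens to look for; check each vendor’s documentation for the current list. The sample log lines are made up.

```python
import re
from collections import Counter

# Assumes the "combined" access log format; adapt the pattern to your server config.
LOG_LINE = re.compile(
    r'\S+ \S+ \S+ \[[^\]]+\] "(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "[^"]*" "(?P<ua>[^"]*)"'
)

# Tokens for fetches triggered by a live user request (assumed list, verify it).
USER_TRIGGERED_AGENTS = ("ChatGPT-User", "Perplexity-User", "Claude-User")

def summarize_ai_hits(log_lines):
    """Count user-triggered AI fetches per path and per HTTP status code."""
    by_path, by_status = Counter(), Counter()
    for line in log_lines:
        match = LOG_LINE.match(line)
        if not match:
            continue  # skip lines that are not in the assumed format
        ua = match["ua"].lower()
        if any(token.lower() in ua for token in USER_TRIGGERED_AGENTS):
            by_path[match["path"]] += 1
            by_status[match["status"]] += 1
    return by_path, by_status

# Made-up log lines for illustration only.
SAMPLE_LOG = [
    '203.0.113.5 - - [10/Jun/2026:10:00:00 +0000] "GET /pricing HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; ChatGPT-User/1.0; +https://openai.com/bot)"',
    '203.0.113.5 - - [10/Jun/2026:10:00:09 +0000] "GET /pricing HTTP/1.1" 499 0 "-" "Mozilla/5.0 (compatible; ChatGPT-User/1.0; +https://openai.com/bot)"',
    '198.51.100.7 - - [10/Jun/2026:10:01:00 +0000] "GET /blog HTTP/1.1" 200 9001 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"',
]

paths, statuses = summarize_ai_hits(SAMPLE_LOG)
print("Most requested pages:", paths.most_common(5))
print("Status codes:", dict(statuses))
```

The per-status tally is where 499s will show up if you have any.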

- Here are the interesting and actionable insights you can uncover by analyzing the AI logs collected on your server (I stole them from Jerome Salomon):

- Check for 499 errors in the logs: that’s when ChatGPT decides to cut its visit short because your site took too long to load (so yeah, time to revisit TTFB or other webperf issues).

- Even if it’s marginal, keep an eye on traffic from AI chatbots by adding this regex into your analytics tool.

^.*ai|.*\.openai.*|.*copilot.*|.*chatgpt.*|.*gemini.*|.*gpt.*|.*neeva.*|.*writesonic.*|.*nimble.*|.*outrider.*|.*perplexity.*|.*google.*bard.*|.*bard.*google.*|.*bard.*|.*edgeservices.*|.*astastic.*|.*copy.ai.*|.*bnngpt.*|.*gemini.*google.*$

At the end of the day, you’ve got to understand that GEO is way more about branding than traffic acquisition. The goal is to get your brand mentioned, not to expect people to click through to your site (they basically never do).

So apart from monitoring direct traffic or branded searches in search engines (which could just as well come from your overall comms efforts), it’s basically impossible to measure the direct business impact of your GEO strategy.

Research Paper: “Don’t Measure Once”, a Concrete Protocol for Tracking GEO Visibility

📄 Source: “Don’t Measure Once: Measuring Visibility in AI Search” — Schulte, Bleeker & Kaufmann (April 2025, arXiv).

- Run the same prompt at least 3 to 5 times per session. Unlike Google where position 3 stays position 3 for a while, an LLM can cite completely different sources from one run to the next. A single check tells you nothing about your actual visibility.

- Create 3+ variations of each prompt you’re tracking. Rephrase the same intent differently (e.g., “best CRM for startups” / “which CRM should a startup use” / “recommend a CRM tool for a small team”). This is how real users search — and each variation can trigger different sources.

- Track over a minimum 7-day window before drawing any conclusion. The researchers found that results can shift significantly day to day. A brand appearing 4 out of 5 times on Monday might show up 1 out of 5 on Thursday. You need at least a week of data to spot real trends vs. noise.

- Measure overlap between runs, not just “did I appear.” The study uses Jaccard similarity to compare result sets: out of all the sources mentioned across two runs of the same prompt, what % are the same? If you get 80%+ overlap, the results are stable. Below 50%, the output is volatile and you’ll need more data points to trust any conclusion.

- Don’t trust a single “position” in an AI answer. A result that says “you were cited 2nd” is meaningless on its own. Track your appearance rate instead: out of 20 runs this week, how many times were you mentioned? That ratio is your real GEO visibility metric.
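The two metrics in this protocol are simple to compute once you have stored the cited sources for each run. A minimal sketch, assuming each run is a list of cited domains. Jaccard similarity is the size of the intersection divided by the size of the union; the 80% and 50% thresholds are the ones quoted in the list above; the function names and sample runs are made up for illustration.

```python
from itertools import combinations

def jaccard(run_a, run_b):
    """Share of sources common to both runs, out of all sources seen in either."""
    a, b = set(run_a), set(run_b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def mean_overlap(runs):
    """Average Jaccard similarity over every pair of runs of the same prompt."""
    pairs = list(combinations(runs, 2))
    if not pairs:
        raise ValueError("need at least two runs to measure overlap")
    return sum(jaccard(a, b) for a, b in pairs) / len(pairs)

def appearance_rate(runs, domain):
    """Fraction of runs in which the domain was cited at least once."""
    if not runs:
        raise ValueError("need at least one run")
    return sum(domain in run for run in runs) / len(runs)

def stability(overlap):
    """Label an overlap score using the thresholds quoted in the list above."""
    if overlap >= 0.8:
        return "stable"
    if overlap < 0.5:
        return "volatile"
    return "in between"

# Made-up runs of one prompt; each inner list is the set of cited domains.
runs = [
    ["mybrand.com", "rival.com", "review-site.com"],
    ["mybrand.com", "rival.com", "forum.example"],
    ["rival.com", "review-site.com", "forum.example"],
    ["mybrand.com", "rival.com", "review-site.com"],
]

overlap = mean_overlap(runs)
print(f"Mean overlap: {overlap:.2f} ({stability(overlap)})")
print(f"Appearance rate: {appearance_rate(runs, 'mybrand.com'):.0%}")
```

Averaging over all pairs of runs is my own choice for summarizing more than two runs; comparing consecutive days instead would be just as defensible.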

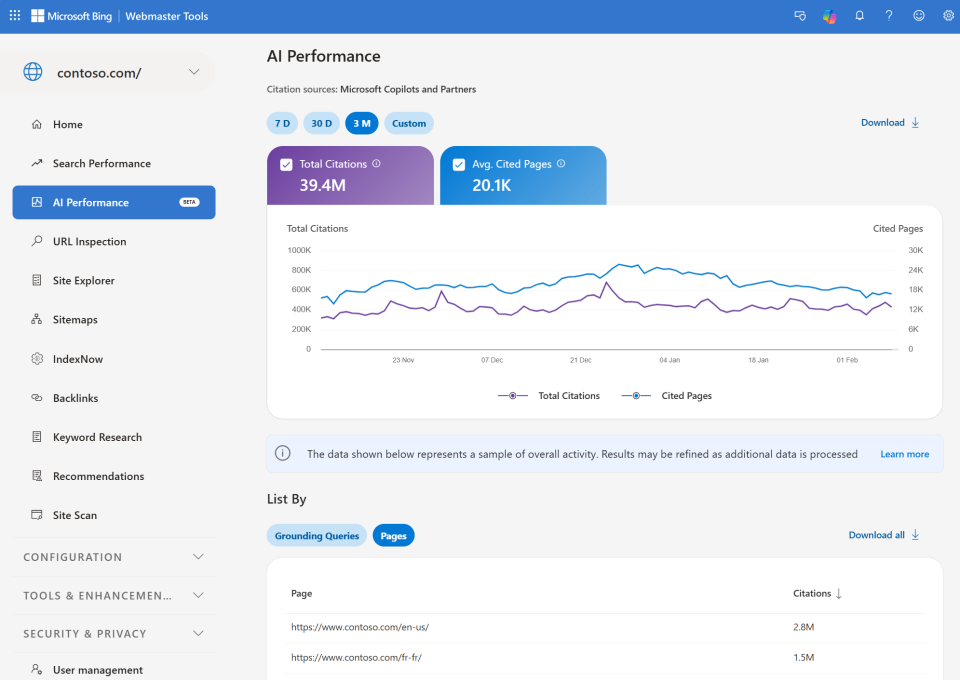

Bing Webmaster Tools Just Launched AI Performance Reporting

- Pages cited in AI answers – Which URLs are referenced most frequently

- Average cited pages – Daily unique pages cited during your selected timeframe

- Grounding queries – Key phrases the AI used when retrieving your content

- Citation trends over time – 7 days, 30 days, 3 months, or custom ranges

The “grounding queries” shown are NOT the actual user prompts. They’re labels assigned to prompts by Bing’s system: grouped, generalized phrases summarizing citation activity. (Source: Jean-Christophe Chouinard on LinkedIn.)